Executive summary

AI agents and agentic AI aren’t synonyms, and confusing them costs organizations real money. Although there’s still some debate about an industry-wide definition, at Cloudflight we describe an AI agent as a software component designed to handle a specific, well-defined task. Agentic AI, on the other hand, describes a broader system architecture capable of autonomous goal-setting and multi-step planning, which results in self-directed action.

The distinction matters now more than ever. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. Many of those problems start with organizations not really understanding what they’re purchasing.

Key facts to keep in mind:

- AI agents are task-specific components; agentic AI is the orchestration architecture that puts them to work.

- Gartner expects 40% of enterprise applications to include task-specific AI agents by the end of 2026, up from fewer than 5% in 2025.

- 23% of organizations are already scaling agentic systems; most are doing so in only one or two functions.

- Gartner explicitly flags “agent washing” as a primary source of failed projects: vendors relabeling chatbots and robotic process automation (RPA) tools as AI agents.

Bottom line: getting this terminology right is a prerequisite for making smart technology investments.

Why this terminology became so confusing

The terms arrived together and evolved in public. Andrew Ng helped popularize the word “agentic” in a 2024 talk, right as large language models were becoming capable enough to support businesses beyond simple generative tasks. Vendors rushed to attach the word “agent” to every product in their catalog. By early 2025, the average enterprise AI pitch deck contained both terms, often used interchangeably.

This linguistic collision has real consequences. Gartner analysts describe most agentic AI projects right now as “early-stage experiments or proof of concepts that are mostly driven by hype and are often misapplied.” Organizations end up expecting a single-task automation tool to solve complex, cross-functional problems it was never designed for. The result is a patchwork of disconnected automations with inconsistent logic and budgets that often overrun.

Luckily, you don’t need a computer science degree to understand the difference. It comes down to two distinct concepts: what an entity does versus what capability a system possesses.

What an AI agent is, and what it isn’t

Formally speaking, an AI agent is a computational system that maps observations of an environment to actions according to a goal-directed policy defined by its designer or training process. This might sound confusing, so let’s put it in more down-to-earth terms: it’s software designed to perceive input from its environment, reason about that input, and take action toward a defined goal. The critical word is “defined.” Someone specified what this agent is supposed to do, and the agent executes within those parameters.
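The formal definition becomes easier to see in a short sketch. The Python below is illustrative only: `InvoiceInbox`, `policy`, and `run_agent` are hypothetical names, and a one-line rule stands in for the reasoning step that a real AI agent would usually hand to a model. What it shows is the shape of the definition: observe, map the observation to an action, act, and stop once the designer-defined goal is met.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class InvoiceInbox:
    """Hypothetical environment: a queue of invoices waiting for validation."""
    pending: list[dict]
    approved: list[dict] = field(default_factory=list)
    flagged: list[dict] = field(default_factory=list)

    def observe(self) -> Optional[dict]:
        # Perceive: the agent only sees the next pending invoice.
        return self.pending[0] if self.pending else None

    def apply(self, action: str) -> None:
        # Act: the environment changes in response to the chosen action.
        invoice = self.pending.pop(0)
        target = self.approved if action == "approve" else self.flagged
        target.append(invoice)


def policy(invoice: dict) -> str:
    # Reason: map an observation to an action. A simple rule stands in
    # for the model call a real agent would typically make here.
    return "approve" if invoice["amount"] == sum(invoice["line_items"]) else "flag"


def run_agent(env: InvoiceInbox, policy: Callable[[dict], str], max_steps: int = 100) -> int:
    """Observe, decide, act, until the designer-defined goal (an empty inbox) is met."""
    steps = 0
    while steps < max_steps:
        observation = env.observe()
        if observation is None:  # goal reached: nothing left to validate
            break
        env.apply(policy(observation))
        steps += 1
    return steps


inbox = InvoiceInbox(pending=[
    {"id": "INV-1", "amount": 30, "line_items": [10, 20]},
    {"id": "INV-2", "amount": 50, "line_items": [10, 20]},
])
steps = run_agent(inbox, policy)
print(steps, [i["id"] for i in inbox.approved], [i["id"] for i in inbox.flagged])
# 2 ['INV-1'] ['INV-2']
```

Note that both the goal (an empty inbox) and the set of possible actions (approve or flag) are fixed by the designer, not chosen by the agent.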

The bounded nature of AI agents

AI agents operate inside explicit boundaries set by their design and permissions. For instance, a customer service agent can:

- look up order history.

- process refunds.

- route tickets.

It can’t decide to redesign the entire support workflow because it noticed a pattern in complaints. That decision falls outside its scope.

This doesn’t make agents weak or limited in a pejorative sense. A well-designed agent handling invoice validation, for example, can be extraordinarily reliable precisely because its scope is narrow.

A contained scope also greatly reduces exposure to the usual AI pitfalls. A narrowly scoped agent has far less room to improvise or hallucinate instructions, and it doesn’t decide on its own to start handling vendor onboarding just because that seemed related.
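One common way to enforce these boundaries is an allow-list of tools fixed at design time. The sketch below assumes a hypothetical `BoundedAgent` class and stubbed tools that mirror the three customer service capabilities listed above. In a real system a model would choose which tool to call, but the check in `act` applies no matter what the model asks for.

```python
from __future__ import annotations

from typing import Callable


class OutOfScopeError(Exception):
    """Raised when the agent is asked to do something outside its design."""


class BoundedAgent:
    """Hypothetical customer service agent that can only call allow-listed tools."""

    def __init__(self, tools: dict[str, Callable[..., str]]):
        # The allow-list is fixed at design time; the agent cannot extend it.
        self._tools = dict(tools)

    def act(self, tool_name: str, **kwargs) -> str:
        # Refuse anything that was not explicitly granted.
        if tool_name not in self._tools:
            raise OutOfScopeError(f"'{tool_name}' is outside this agent's scope")
        return self._tools[tool_name](**kwargs)


# Hypothetical tool implementations, stubbed for illustration.
def look_up_order_history(customer_id: str) -> str:
    return f"orders for {customer_id}: #1001, #1002"


def process_refund(order_id: str) -> str:
    return f"refund issued for {order_id}"


def route_ticket(ticket_id: str, queue: str) -> str:
    return f"ticket {ticket_id} routed to {queue}"


agent = BoundedAgent({
    "look_up_order_history": look_up_order_history,
    "process_refund": process_refund,
    "route_ticket": route_ticket,
})

print(agent.act("process_refund", order_id="#1002"))
# refund issued for #1002

try:
    agent.act("redesign_support_workflow")
except OutOfScopeError as err:
    print(err)
# 'redesign_support_workflow' is outside this agent's scope
```

Keeping the boundary in ordinary code rather than in the model’s instructions means a scope violation fails loudly instead of depending on the model’s judgment.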

What actually qualifies as an AI agent today

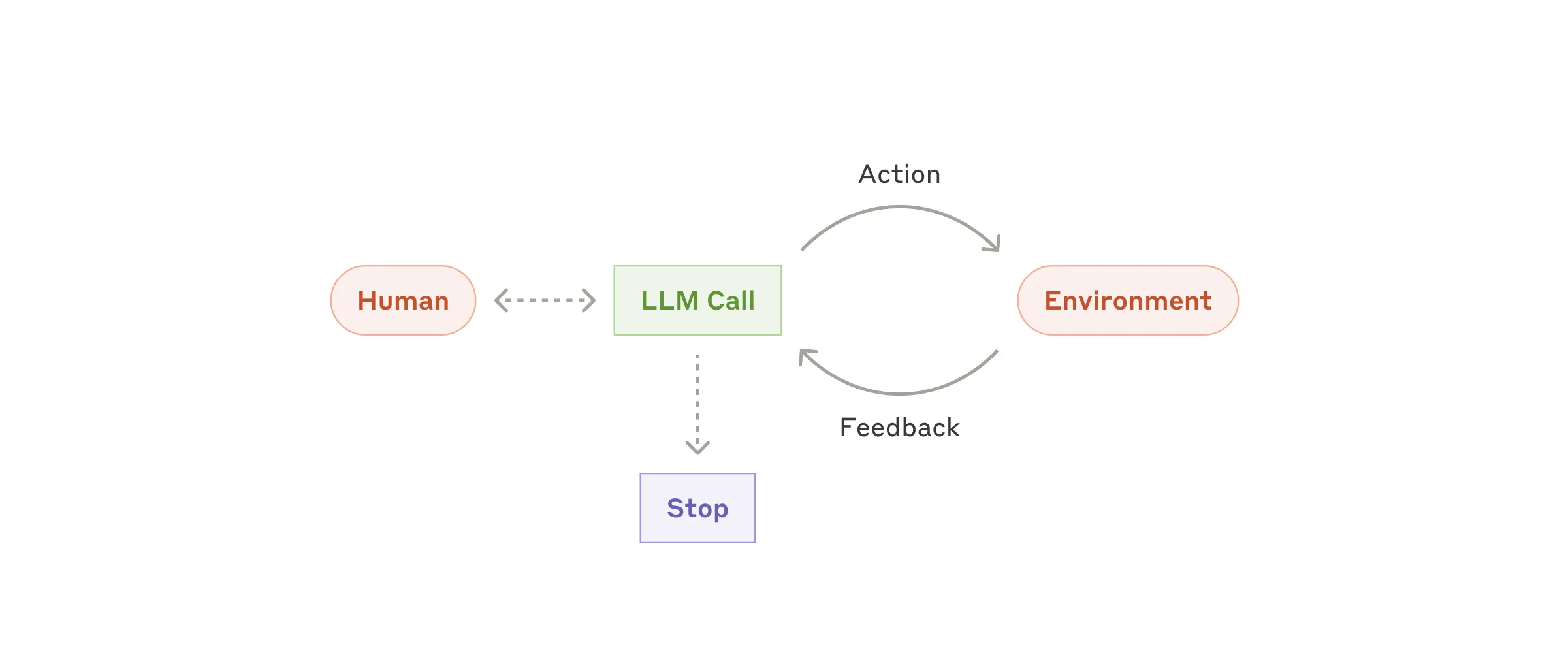

The category is broader than many people assume. According to Anthropic’s guidance on building effective agents, an agent is a system “where LLMs dynamically direct their own processes and tool usage, maintaining control over how they accomplish tasks.” Anthropic contrasts this with workflows, in which LLMs and tools follow predefined code paths, much like a script.

Image source: Anthropic

Practical examples of genuine AI agents include a scheduling assistant that checks calendar availability, resolves conflicts, and proposes alternatives, or a code review agent that reads a pull request, checks it against a style guide, and posts the issues it finds as comments. Both perceive an environment, reason about it, and act. Both are bounded by what they were designed to handle.
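Anthropic’s distinction between agents and predefined workflows can be sketched with the scheduling example. In the hypothetical Python below, `workflow` follows one fixed code path, while `agent` loops and lets a `decide` function pick each next step from what it has observed so far. The calendar, function names, and working hours are all assumptions for illustration. In a real agent, `decide` is where the LLM would sit; the rule-based stand-in only keeps the sketch runnable and, on its own, is no more than a workflow written as a loop.

```python
from __future__ import annotations

from typing import Callable, Optional

# Hypothetical calendar: hours (24-hour clock) that are already booked.
BOOKED = {9, 10, 14}


def check_availability(hour: int) -> bool:
    return hour not in BOOKED


def next_hour(hour: int) -> Optional[int]:
    # Candidate alternative: the following hour, within a 9:00-18:00 working day.
    return hour + 1 if hour + 1 < 18 else None


def workflow(requested: int) -> str:
    """Workflow: one predefined code path, the same steps for every request."""
    return f"booked {requested}:00" if check_availability(requested) else "conflict"


def agent(requested: int, decide: Callable[[dict], str], max_steps: int = 20) -> str:
    """Agent loop: `decide` chooses each next step from the state observed so far."""
    state: dict = {"candidate": requested, "free": None}
    for _ in range(max_steps):
        step = decide(state)
        if step == "check":
            state["free"] = check_availability(state["candidate"])
        elif step == "find_alternative":
            state["candidate"] = next_hour(state["candidate"])
            state["free"] = None
        elif step == "propose":
            return f"proposed {state['candidate']}:00"
        else:  # "give_up"
            return "no slot available today"
    return "step budget exhausted"


def rule_based_decide(state: dict) -> str:
    # Stand-in for an LLM choosing its next tool call.
    if state["candidate"] is None:
        return "give_up"
    if state["free"] is None:
        return "check"
    return "propose" if state["free"] else "find_alternative"


print(workflow(9))                    # conflict
print(agent(9, rule_based_decide))    # proposed 11:00
```

Swapping `rule_based_decide` for a model-backed function changes who directs the process without changing the loop, while the step budget and the fixed set of steps keep the agent bounded.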

A traditional chatbot that picks from a menu of pre-written responses, on the other hand, doesn’t qualify as an AI agent. Neither does a form-filling macro, because it doesn’t reason. Worryingly, these are exactly the systems that often get relabeled as AI agents in sales pitches.