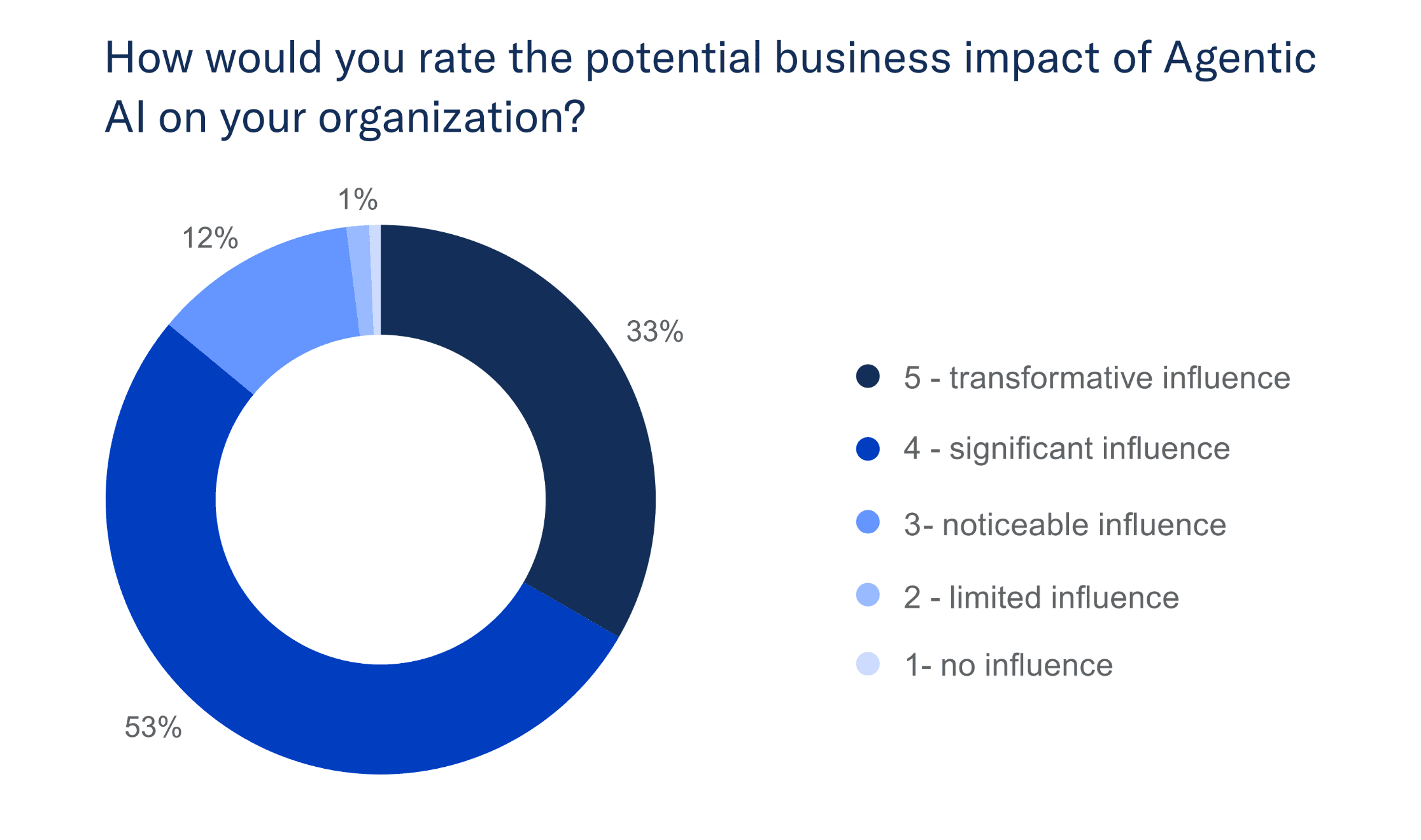

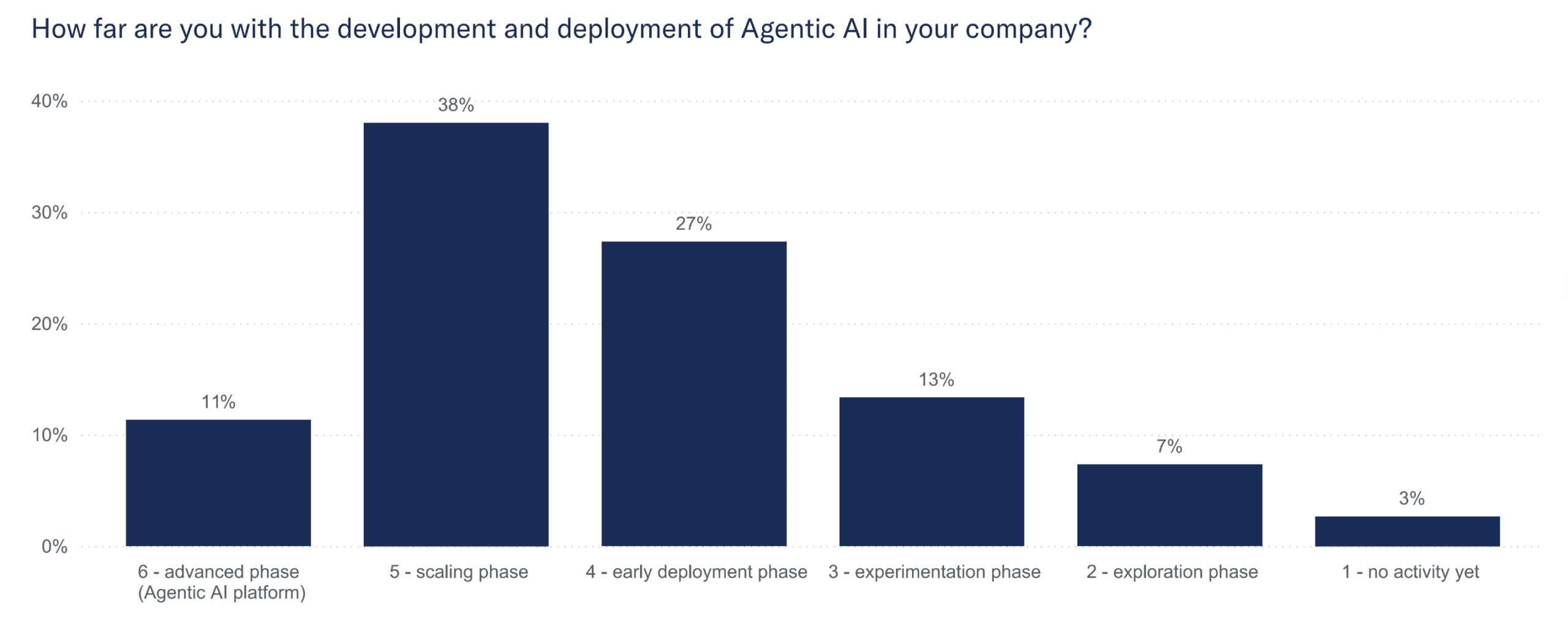

Eighty-six percent of German enterprises believe Agentic AI will significantly impact their business, yet only 11% have moved beyond pilot projects to reach advanced deployment.

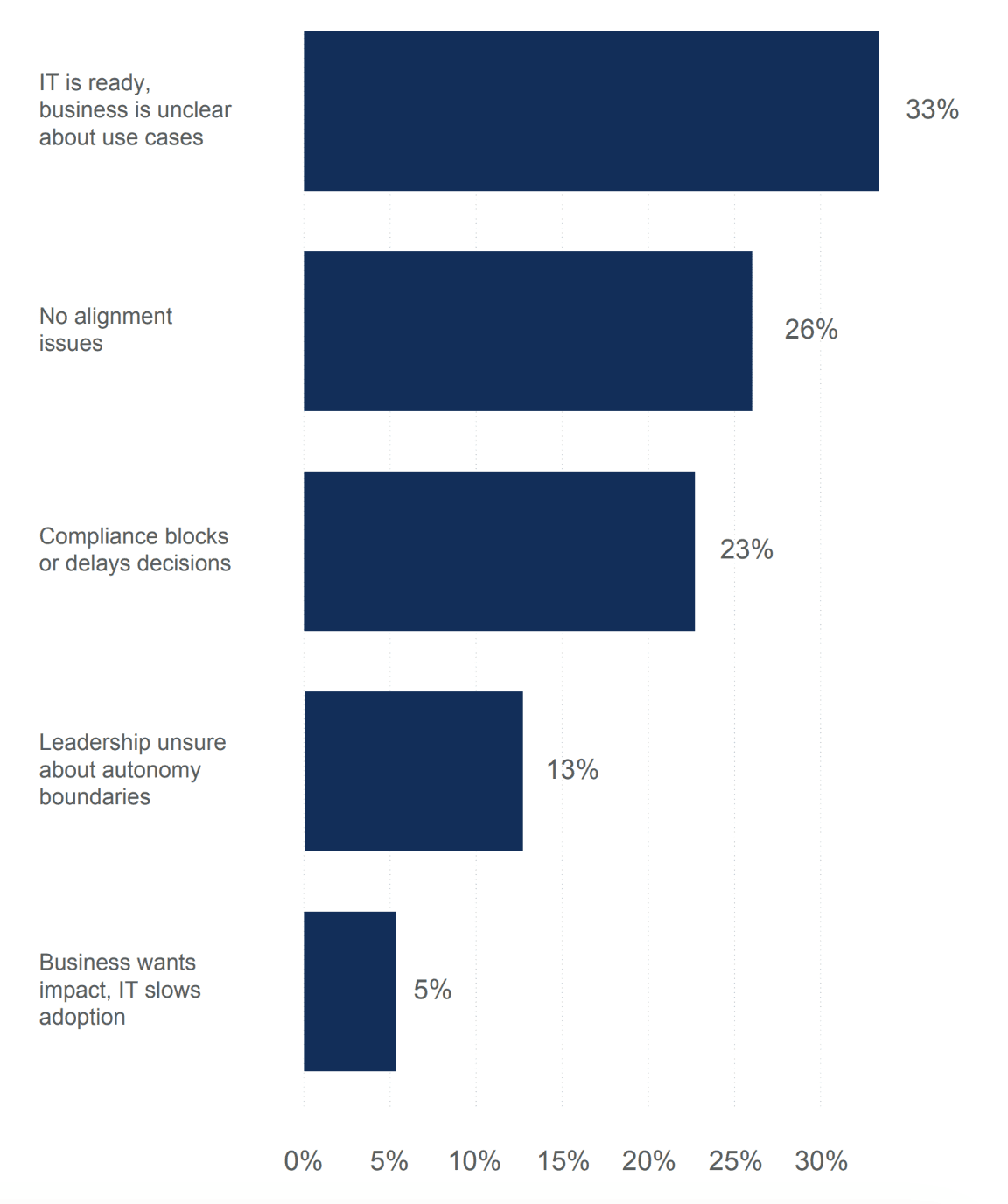

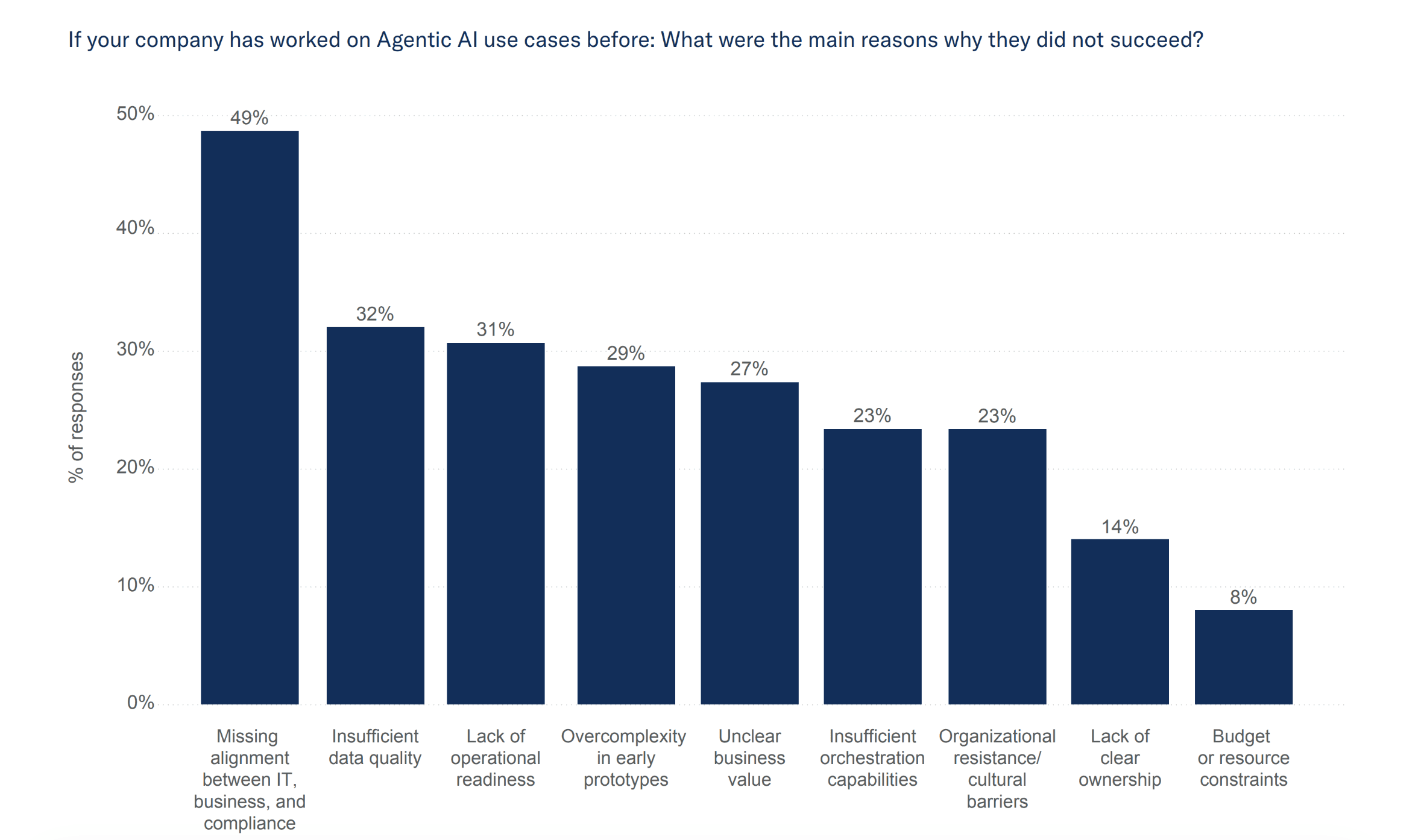

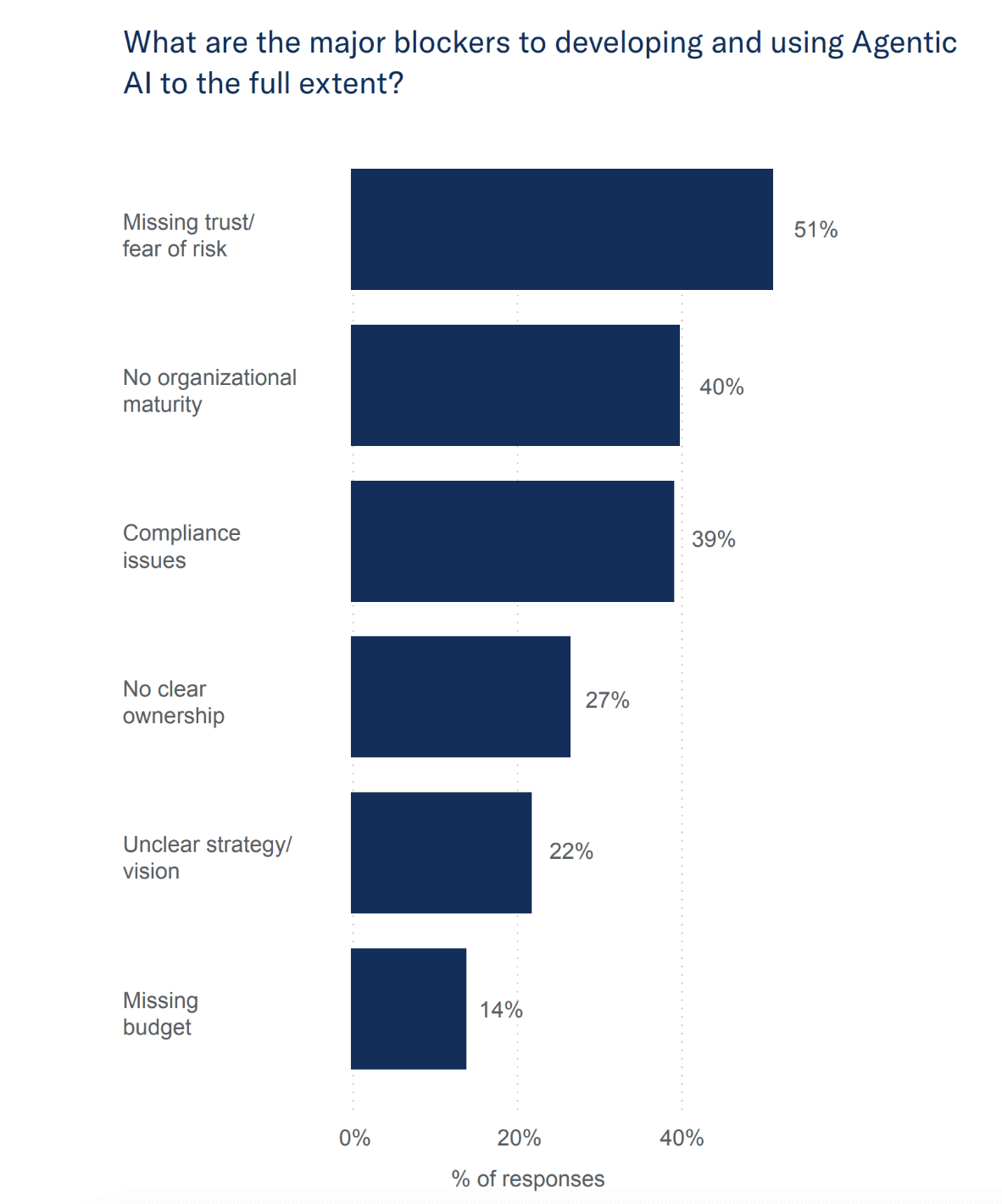

At first glance, this 75-percentage-point gap between conviction and execution seems to come down to the usual suspects: technology limitations and budget constraints. Our study points elsewhere. The gap can be traced to three organizational paradoxes that keep even sophisticated companies stuck in perpetual experimentation.

The companies that solve these paradoxes are building operational advantages that compound with every quarter, and not because they are smarter or better funded than their competitors. They’ve simply figured out what the majority haven’t: Agentic AI adoption is an organizational challenge disguised as a technical one.